AI Project Scoper turns rough ideas into project instructions Claude Code and Codex can actually follow — so non-developers stop getting stuck at 90%.

Built live with developer Brandon and a room full of AI consultants who were tired of the last 10% falling apart.

Students on the call kept describing the same pattern: an app feels almost finished, then collapses on the foundational pieces nobody planned at the start. Same six bombs, every time.

Demo flows look fine until the second user shows up — and there is no admin panel, role separation, or password reset path.

Built for one person and bolted onto a team later. Now every screen leaks data it should not, and rebuilding access control is a rewrite.

No one defined what private data looks like in this app, so the AI made reasonable defaults. That is not the same as secure.

Every prompt nudges the project. By prompt 40, the app barely resembles the original idea, and nobody can say what changed.

Code runs on the laptop and dies in the cloud. There is no checklist for env vars, secrets, build steps, or hosting choices.

"Done" is whatever the last screen looks like. Without explicit criteria up front, you cannot tell the AI it's wrong — only that it is different.

This was a training call inside the Agentic AI Certified Consultant Program. Jeff asked a simple question — and the room agreed faster than a vote usually moves.

The answer came back almost unanimous. Students wanted help getting reliable projects out of Claude Code and Codex. They were sick of the back-and-forth juggling act, sick of getting to 90% and watching it crack.

No name yet. No landing page. Just the realization that the missing layer was scoping — the foundational decisions everyone was skipping before the first prompt. The audience watched the idea form in real time.

Brandon shared the real frameworks he uses on production work: scaffolding, structured to-do lists, project instructions, and the habit of having coding agents maintain a living project wiki as they build. Documentation updates as work happens, not after.

Real planning files. Real to-do structures. Real deployment notes. Not theory — the way a senior developer actually shapes a project so an agent does not get lost.

The room watched a tool go from "we should fix this" to "this exists." That is what gave AIProjectScoper.com its DNA — Brandon's developer instincts, packaged so a non-developer can answer questions in plain English and walk away with a real scaffold.

This is the path AI Project Scoper walks you through. Each step is one that vibe-coders normally skip, and one where projects normally break.

1. Rough description in plain English.
2. AI asks the questions you'd skip.
3. Stack, auth, hosting, data model.
4. A real to-do list, not a vibe.
5. How it actually goes live.
6. Living docs the agent maintains.
7. One file you hand to the agent.

You start with a rough idea. AI Project Scoper interviews you the way a senior developer would interview a client at the kickoff meeting. Stack choices, auth model, who logs in, what data is sensitive, where it deploys.

If a question gets too technical or you don't know the answer, the tool explains the concept and recommends what you should choose for your project. The point is to remove the "I have no idea what that means" wall — not to make you sound like a dev.

When the interview is done, you get a zip file. Project documents already filled in. Tasks already structured. Deployment already drafted. A wiki the agent will keep updating as it builds.

Drop the zip into a new repo, then tell Claude Code or Codex: review project instructions in the zip file.
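
If you would rather unpack the zip with a script than by hand, a minimal sketch might look like this. The function name `unpack_scaffold` and the file name `scaffold.zip` are assumptions made up for illustration; the actual download may be named and laid out differently.

```python
import zipfile
from pathlib import Path


def unpack_scaffold(zip_path, repo_dir):
    """Extract the scaffold zip into repo_dir and return the top-level names it added."""
    repo = Path(repo_dir)
    repo.mkdir(parents=True, exist_ok=True)
    with zipfile.ZipFile(zip_path) as archive:
        archive.extractall(repo)
        # Collect the first path component of every entry in the archive.
        top_level = {name.split("/")[0] for name in archive.namelist() if name}
    return sorted(top_level)


if __name__ == "__main__":
    # Hypothetical paths; replace with the real download and repo folder.
    if Path("scaffold.zip").is_file():
        print(unpack_scaffold("scaffold.zip", "my-new-repo"))
```

The returned list is a quick sanity check that the documents landed in the repo before you point the agent at them.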

The fastest way to ship something real with Claude Code or Codex is to plan the last 10% before the first prompt — not after the project is on fire.

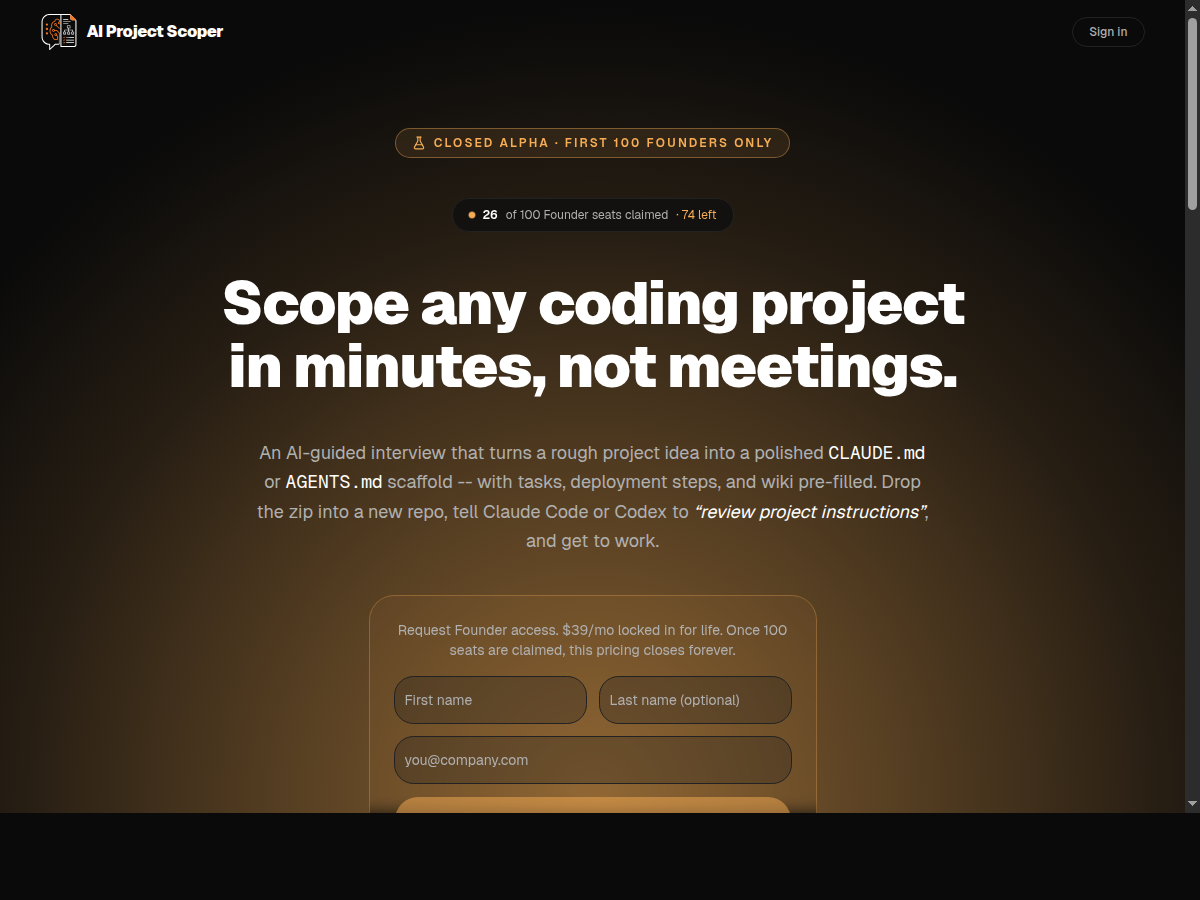

A real screenshot — not a mockup. Closed-alpha founder access was visible at the time of capture; pricing and seat count on the live site may change.

Examples shown on the live site: SaaS web apps, internal dashboards, Chrome extensions, API backends, MCP servers & AI agents, mobile apps, CLI tools, e-commerce storefronts, client portals with auth, learning & member sites, browser games, and Slack/Discord bots.

Claude Code projects get a CLAUDE.md. Codex projects get an AGENTS.md. Running both? You can get both, structured for each agent.
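
A quick way to confirm the right instruction file is in place before the first prompt could look like the sketch below. The agent labels `claude-code` and `codex` are hypothetical keys chosen for this example, and the check assumes the files sit at the repo root.

```python
from pathlib import Path

# Agent labels are hypothetical keys for this sketch; the file names come from the text above.
INSTRUCTION_FILES = {"claude-code": "CLAUDE.md", "codex": "AGENTS.md"}


def missing_instruction_files(repo_dir, agents):
    """Return the instruction files the chosen agents expect but the repo lacks."""
    repo = Path(repo_dir)
    expected = [INSTRUCTION_FILES[agent] for agent in agents]
    # Assumes each file lives at the repo root.
    return [name for name in expected if not (repo / name).is_file()]


if __name__ == "__main__":
    print(missing_instruction_files(".", ["claude-code", "codex"]))
```

An empty list means every agent you plan to run has its file.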

Tasks are sequenced so foundational pieces — auth, permissions, data boundaries — land first, instead of being patched at 90%.
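
One way to picture "foundational pieces first" is as a dependency ordering. The sketch below is not how AI Project Scoper sequences tasks internally (the source does not say); it uses Python's standard `graphlib` module, and the task names and dependencies are invented for illustration.

```python
from graphlib import TopologicalSorter

# Hypothetical task graph: each task maps to the set of tasks it depends on.
TASKS = {
    "auth": set(),
    "permissions": {"auth"},
    "data_boundaries": {"permissions"},
    "dashboard_ui": {"data_boundaries"},
    "billing_page": {"auth"},
}


def build_order(tasks):
    """Return one valid order in which every task comes after its dependencies."""
    return list(TopologicalSorter(tasks).static_order())


if __name__ == "__main__":
    for step, task in enumerate(build_order(TASKS), start=1):
        print(step, task)
```

Whatever order comes out, `auth` always precedes `permissions`, which always precedes `data_boundaries`, so no screen gets built on access rules that do not exist yet.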

A docs/deployment.md drafted up front means you ship to a real environment instead of debugging the cloud at 11pm.
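
A deployment doc is most useful when its checklist can be checked by a machine. As a minimal sketch, assuming the doc lists the environment variables the app needs, a preflight script could report which ones are unset. The variable names here are hypothetical; a real project would copy its own list from its deployment notes.

```python
import os

# Hypothetical variable names for illustration only.
REQUIRED_ENV_VARS = ["DATABASE_URL", "SESSION_SECRET"]


def missing_env_vars(required, env=None):
    """Return the required variable names that are unset or empty."""
    env = os.environ if env is None else env
    return [name for name in required if not env.get(name)]


if __name__ == "__main__":
    missing = missing_env_vars(REQUIRED_ENV_VARS)
    if missing:
        print("Missing before deploy:", ", ".join(missing))
    else:
        print("All required environment variables are set.")
```

Running this on the laptop and again in the hosting environment surfaces the "works locally, dies in the cloud" gap before launch night.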

You have ideas and a Claude or Codex subscription. You don't need to fake being a developer to ship.

Inside the Agentic AI Certified Consultant Program — or running your own client engagements — and tired of the 90% wall.

You scope client work fast. A repeatable interview that produces real project documents saves your senior people for what only they can do.

You ship offers, tools, and internal apps. Scope first, then let the agent build, then deploy with the doc you already wrote.

You paid for an "AI build" that hit 90%. You want the foundation right next time so the last 10% doesn't take ten weeks.

The fastest projects start the same way the most expensive ones do: with a senior developer asking the right questions before any code gets written. AI Project Scoper bottles that, in plain English, for non-developers.

VA Staffer AI Employees take ideas from rough to public — scoping with tools like AIProjectScoper.com, building the assets, deploying the page, and keeping the wiki current as the project evolves.

Beau turns live-call moments, founder field notes, and new tools into public-facing pages — usually before the room cools off.